Load Testing a Virtual Web Application

Measuring the Performance Impact of Virtualizing a Web Application Server

Christopher L Merrill

©2007 Web Performance, Inc; April 2nd, 2007; v1.2

UPDATE 2

A VMware blogger responded to this report in this post. This reply from the author attempts to clarify some of the points and explain why some of the suggested changes don't match the goal of the tests.

UPDATE

It would appear that a number of factors have sparked some controversy and misinterpretation of our test results. I'll try to address the big ones:

- The title of the Slashdot posting is misleading. It stated a 43% drop in performance. Performance is a broad term that means different things to different people. Since I did not make the Slashdot posting, I cannot correct it, but I will point out that the conclusion in the report (if anyone read that far) is more explicit: it stated a 43% drop in total capacity, and it should be clear from the rest of the report that total capacity is the total number of users that the application can support while meeting the performance criteria. If you examine the charts you will see that average page duration is essentially equal between the two environments up to 300 simultaneous users.

- Production environment – One of the most frequent comments we heard was "No sane organization would use the free server product (VMWare Server 1.0.1) on a production server - it's only for evaluation purposes." First, it is quite true that VMWare recommends the ESX product line for production use, and ESX no doubt has significantly better performance. If your application is performance sensitive, then you should be seriously considering the ESX product. Second, I must say that this comment is exceptionally naive. Many organizations ARE using the free VMWare Server product for production use, us included! For those organizations, putting a number on the performance impact is valuable. And I should point out that our public web server, which gracefully survived the slashdotting with barely a hiccup, is running on the very same free version, along with a number of other hosted VMs.

- When will you test VMWare ESX? Good question. Unfortunately I don't have a good answer.

- Virtualization is bad. At least, some readers interpreted the article as a statement of such. On the contrary, virtualization is wonderful! We actually think these results are quite impressive, especially considering the $0 software cost and the ease of maintenance it affords.

- You guys got a beef with VMWare? On the contrary, we love VMWare. We have been customers since their very first product was introduced. As I said earlier, our public web server runs on VMWare and we use it for in-house systems as well as in our test lab.

Introduction

Virtualization is hot. Over the past few months, it would be difficult to pick an IT magazine out of my stack that does not have an article on virtualization. Even in our small company, we have two VMware servers. This allowed us to consolidate nine underutilized servers onto two physical machines. Because the original servers were severely underutilized, the virtualized servers actually perform better (running on newer hardware). They are easier to manage - especially for backups. We have reduced the risk of configuration changes, software installs and upgrades by taking snapshots before these procedures. What's not to like?

Many organizations are implementing virtualization strategies for these reasons (and many others). But what is the impact of a virtualized server when the server is under heavy usage? What happens when the server is pushed to the limits of its performance?

These questions are asked many times every day. Numerous reports have been published on the performance impact of virtualization - they are usually comparisons of which VM products are faster or focus on a specific area of performance, such as CPU, disk or network performance (see the resources section at the bottom). But for a manager considering the virtualization of IT systems, the question of performance combines all of these things and more. Modern web applications are complex beasts - and the performance of the individual subsystems is frequently not a good indicator of the performance of the entire system.

The purpose of this article is to explore the performance impact of virtualization on a web application that uses typical development methodologies. The goal is to give administrators some idea of the performance impact that virtualization will have on their applications as they become loaded - and how it is different from the native servers. For our reference application, we will use the ASP.NET Issue Tracker System, part of the Microsoft ASP.NET Starter Kit. While this sample application is relatively simple, it makes use of one of the most common development frameworks and the code is typical of many larger IT web applications.

We ran four load tests on the application. The first measured the performance of the web application running on a native Windows 2003 Server installation. The second measured the performance of the web application running on a virtual Windows 2003 Server installation within VMware Server 1.0.1, which in turn ran on Linux (CentOS 4.4). The base hardware for both machines is a Dell PowerEdge SC1420 with dual Xeon 2.8GHz processors. The virtualized server has the same memory available to it (2 GB) as the native server (which implies that the physical machine running VMware has more memory). Since the Intel Xeon processor supports hyperthreading, there was some debate as to the impact of hyperthreading on the virtualized machine, so we ran two additional tests with hyperthreading disabled, one on the native server and one on the virtualized server.

The tests were performed using our load testing software Web Performance Load Tester™ 3.3. For details about the construction of the testcases and an introduction to load testing .NET web applications, see our Load Testing 101 article.

Test Description

First, a big caveat: This is a relatively simplistic test. Any enterprise IT web application is likely to be far more complex. These results should not be interpreted as a universal law - but rather a single data point to keep in mind when making order-of-magnitude estimates. If your application is mission-critical, then you should be doing your own load tests to assess the performance impact. After completing this test, a large list of unanswered questions came immediately to mind - see our list at the end of this article.

We used two testcases (user scenarios) for this test. The first simulated users performing queries on the system. The second simulated users entering new issues into the system. Together, these exercised disk I/O, both reading and writing. The rendering of the pages by the application exercised CPU and memory utilization, and the network subsystem was exercised by the requests and responses between our load engines and the web server. Note that graphical performance was not measured by this test, since we were testing the performance impact on a server application.

The software (Load Tester™) collects a large number of measurements during a load test - Hits/sec, Bytes/Sec, min/max/avg duration for every page and transaction, etc. But the number that is really important to managers and administrators is the number of users the system can effectively support. One of the items in the Load Tester™ report is a User Capacity estimation. This estimate comes from an examination of the average page duration and error rate as the number of simultaneous users increases. When these parameters exceed the desired thresholds, the system is considered to be overloaded. The User Capacity is the primary indicator of system performance for the purpose of this report. The thresholds used for this report are 6 second page duration and 0% error rate. This means that when average page duration exceeds 6 seconds or any errors are detected, the system is considered to have reached the capacity limit.
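The threshold rule described above can be sketched in a few lines of Python. This is only an illustration of the idea, not Load Tester's actual algorithm: the `Sample` type, the `estimate_user_capacity` function, and the sample data are all hypothetical, and the sketch assumes the capacity is simply the highest user level reached before either threshold is first exceeded.

```python
from typing import Iterable, NamedTuple, Optional


class Sample(NamedTuple):
    """One measurement interval from a load test (hypothetical structure)."""
    users: int                  # simultaneous users during the interval
    avg_page_duration_s: float  # average page duration, in seconds
    error_rate: float           # fraction of pages returning errors (0.0 to 1.0)


def estimate_user_capacity(
    samples: Iterable[Sample],
    max_duration_s: float = 6.0,   # threshold used in this report
    max_error_rate: float = 0.0,   # threshold used in this report
) -> Optional[int]:
    """Return the highest user level reached before a threshold is exceeded.

    Samples are walked in order of increasing user count. The first sample
    that exceeds either threshold marks the system as overloaded, and the
    last passing user level is returned. None means even the lowest load
    level failed the criteria.
    """
    capacity = None
    for sample in sorted(samples, key=lambda s: s.users):
        overloaded = (
            sample.avg_page_duration_s > max_duration_s
            or sample.error_rate > max_error_rate
        )
        if overloaded:
            break
        capacity = sample.users
    return capacity


# Made-up measurements, for illustration only (not data from this report).
example_samples = [
    Sample(users=100, avg_page_duration_s=0.5, error_rate=0.0),
    Sample(users=200, avg_page_duration_s=1.2, error_rate=0.0),
    Sample(users=300, avg_page_duration_s=5.9, error_rate=0.0),
    Sample(users=400, avg_page_duration_s=7.1, error_rate=0.0),
    Sample(users=500, avg_page_duration_s=9.4, error_rate=0.02),
]

print(estimate_user_capacity(example_samples))
```

With these made-up samples, the 400-user level is the first to exceed the 6 second threshold, so the sketch reports the previous level as the capacity. A real tool would presumably interpolate between load levels rather than snap to the last passing one.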

Results

In each of the tests, the performance degradation was evident in the same way. As each server configuration neared its capacity limits, the response time started to degrade and the total bytes/sec transferred and cases/min completed reached a plateau. Shortly after this point, the application started returning error pages.

Physical machine

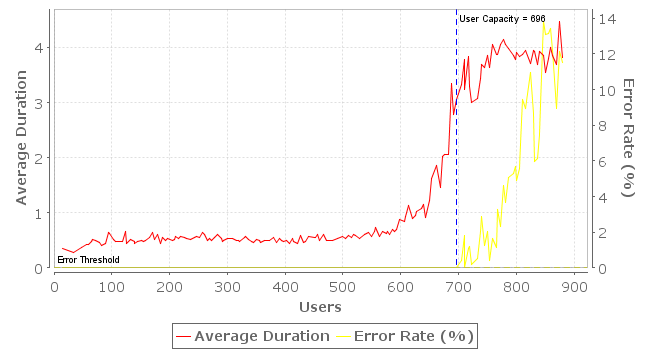

First we examined the performance of the physical machine. Under these conditions, the application had an estimated capacity of 696 simultaneous users.

Virtualized machine

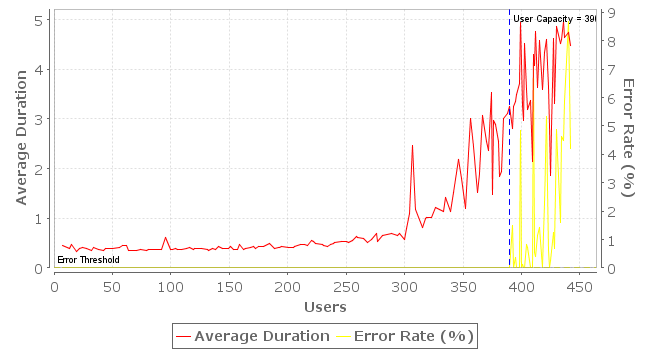

Virtualization clearly takes a toll on peak performance. On the virtualized server, the application had an estimated capacity of 390 simultaneous users.

Physical machine (hyperthreading disabled)

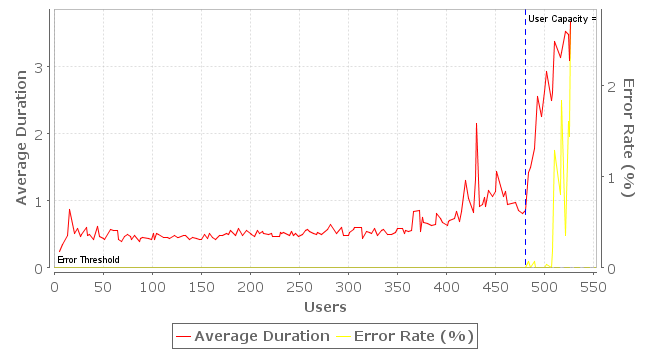

For anyone who doubts the benefits of hyperthreading in the real world, the proof is evident. Disabling the feature reduced the estimated capacity of the physical machine to 481 simultaneous users.

Virtualized machine (hyperthreading disabled)

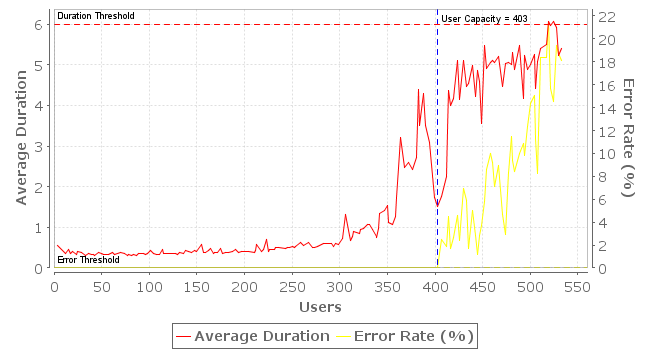

Disabling hyperthreading had a negligible effect on the virtualized machine. In our test, the capacity increased a tiny amount to 403 simultaneous users. The difference between this result and the virtualized machine with hyperthreading enabled, however, is smaller than the margin of error for these tests -- more testing would be required before concluding that performance is better in the virtualized machine with hyperthreading disabled.

More detailed reports are available for each test:

Conclusion

These results indicate that a virtualized server running a typical web application may experience a 43% loss of total capacity when compared to a native server running on equivalent hardware.
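For readers who want to check the arithmetic, the relative capacity loss can be recomputed from the four user-capacity estimates reported in the Results section. The sketch below uses only those four numbers; the function and variable names are illustrative and not part of any tool.

```python
def capacity_loss_percent(native_users: int, virtual_users: int) -> float:
    """Percentage of user capacity lost when moving from native to virtual."""
    return (native_users - virtual_users) / native_users * 100.0


# Estimated user capacities reported in the Results section.
RESULTS = {
    "hyperthreading enabled": {"native": 696, "virtual": 390},
    "hyperthreading disabled": {"native": 481, "virtual": 403},
}

for label, capacity in RESULTS.items():
    loss = capacity_loss_percent(capacity["native"], capacity["virtual"])
    print(f"{label}: {loss:.1f}% loss of capacity")
```

With hyperthreading enabled, the loss works out to just under 44%, which is the figure quoted above as 43%. With hyperthreading disabled on both machines, the gap narrows to roughly 16%, largely because the native server's capacity drops while the virtualized server's capacity is essentially unchanged.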

It should be noted that no tuning was performed on the native server, the virtualized server or the VM's host machine. Administrators with the right tuning techniques will likely be able to improve the performance of one or all of these configurations.

Unanswered Questions

- How much could the performance be equalized with expert tuning?

- Would a different VM platform exhibit a significantly different result?

- Would running a different OS on either the host or the guest change the performance impact?

- Would virtualizing only the web application server or the database server show a different performance change?

Resources

Below are some of the reports we found while researching virtualization performance. While they do not answer the performance questions we think are important to IT managers and administrators, we feel they can provide some additional useful information.

- University of Cambridge VM Performance Test

- VirtualPC 2004 vs. VMWare 4: Part II

- Virtual machine shootout: VMware vs. Virtual PC

Feedback & Comments

Comments about this report may be posted at the company blog post.

Version History

v1.0 - 1st public release (25 jan 2007)

v1.1 - updated 2 apr 2007

v1.2 - email cleanup (23 Jan 09)